How the RYYB sensor on the Huawei P30 Pro works

Update, 5 Sept 2022: Article has been updated to reflect that the main camera sensor is Sony IMX600y and not IMX650 as originally thought.

The Huawei P30 Pro is the first smartphone to use a custom Sony IMX600y sensor. Apart from having a resolution of 40MP, the special feature of this sensor is that it has an RYYB color filter. Huawei claims this makes the sensor collect 40% more light. But what is an RYYB color filter and how does it differ from what other phones have?

To understand the RYYB filter, we must first understand how a Bayer filter works. A Bayer filter is a color filter array overlaid on top of an image sensor, and is named after Bryce Bayer, who invented it.

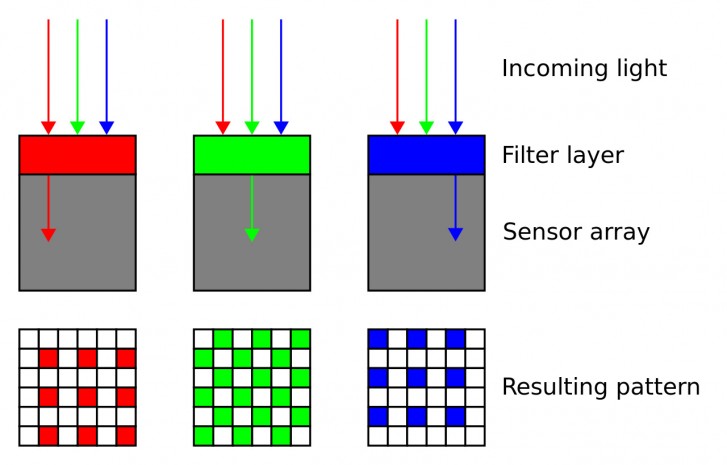

We need a color filter array because an image sensor, by design, is sensitive only to the intensity of light and not to its color. Since it can only detect and capture light, a raw image capture from a digital image sensor is always black and white.

To be able to capture color, we need a color filter array, such as the Bayer filter. First, the ultraviolet and infrared light is filtered out by other filters. Once the incoming light is narrowed down to the visible spectrum, the Bayer filter gets to work.

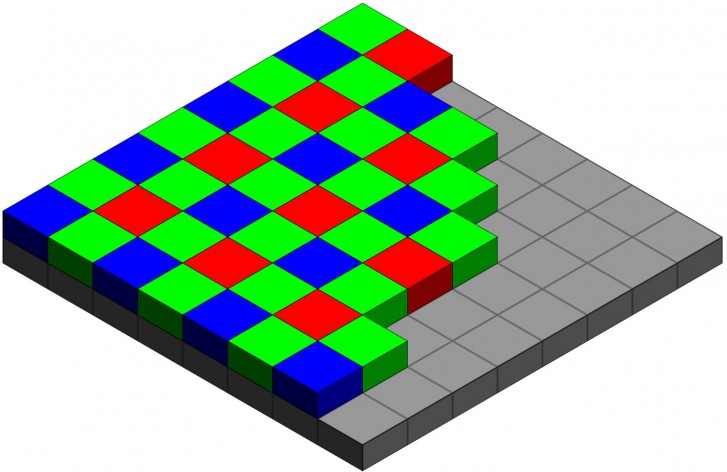

The Bayer filter overlays one colored dye on top of every pixel. So in a grid of 2x2 pixels, there will be one red pixel, one blue pixel and two green pixels.

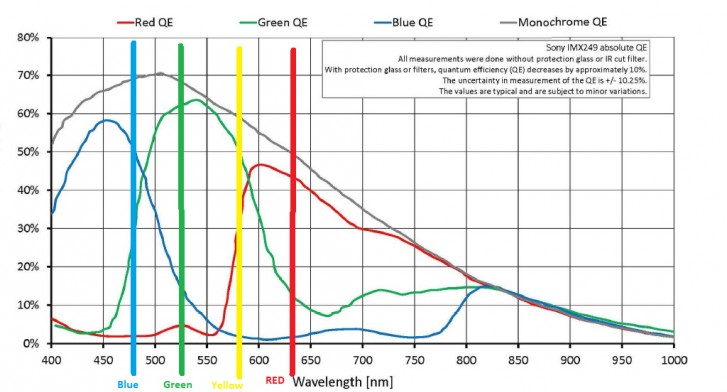
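To make the layout concrete, here is a minimal Python sketch of how the 2x2 tile repeats across a sensor. The names `BAYER_TILE`, `filter_color` and `layout` are our own, made up for illustration, and the placement of red in the top-left corner is an assumption; real sensors may start the tile on a different color.

```python
# One 2x2 tile of the Bayer pattern; it repeats across the whole sensor.
# Assumption: red sits in the top-left corner (the common "RGGB" ordering).
BAYER_TILE = (("R", "G"),
              ("G", "B"))

def filter_color(row, col, tile=BAYER_TILE):
    """Return the filter color sitting over the pixel at (row, col)."""
    return tile[row % 2][col % 2]

def layout(height, width, tile=BAYER_TILE):
    """Return the filter layout as a list of strings, one per sensor row."""
    return ["".join(filter_color(r, c, tile) for c in range(width))
            for r in range(height)]

for line in layout(4, 4):
    print(line)
# RGRG
# GBGB
# RGRG
# GBGB
```

Counting the letters shows the split described above: half the pixels sit under green, a quarter under red and a quarter under blue.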

The choice to go with RGB has its own reasons. Human vision is trichromatic, which means it is sensitive primarily to three groups of wavelengths of light, which correspond closely to red, green and blue colors. Using the overlap between these wavelengths, our brain is able to interpret "colors" (which don't actually exist in reality) and along with the light information picked up by the rods we are able to see. Our cameras are designed to closely mimic our trichromatic vision system.

The Bayer filter places one red, one blue and two green filters on each 2x2 grid of pixels on the image sensor. This means the visible light passing through the array is filtered by the respective color. The pixel underneath the red filter still records only a black and white value, but one made up of red light alone, since the green and blue light has been blocked. As a result, red objects in the frame appear brighter for that pixel than objects of other colors. The same goes for the blue and green pixels.
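The effect of the filters on a capture can be simulated in a few lines of Python. This is a rough sketch that assumes ideal filters, where each one passes its own color completely and blocks the other two; real dyes are not this clean. The function name `mosaic` and the test scene are ours.

```python
BAYER_TILE = (("R", "G"),
              ("G", "B"))
CHANNEL = {"R": 0, "G": 1, "B": 2}  # index of each color in an (R, G, B) tuple

def mosaic(rgb_image, tile=BAYER_TILE):
    """Simulate a raw capture: each pixel keeps only the channel its filter passes."""
    return [[pixel[CHANNEL[tile[r % 2][c % 2]]] for c, pixel in enumerate(row)]
            for r, row in enumerate(rgb_image)]

# A scene that is pure red everywhere.
red_scene = [[(255, 0, 0)] * 4 for _ in range(4)]

for row in mosaic(red_scene):
    print(row)
# [255, 0, 255, 0]
# [0, 0, 0, 0]
# [255, 0, 255, 0]
# [0, 0, 0, 0]
```

Only the pixels under red filters register the red scene as bright. The result is a single brightness value per pixel, which is why the raw capture has no color yet.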

Now we have an image that has varying levels of luminosity per pixel due to the filters. To produce the actual colors, we need a process called demosaicing or debayering. A complex algorithm, usually proprietary to the manufacturer of the camera, helps interpret the different color values. It looks at the information captured by a pixel as well as the surrounding pixels and determines what color value to assign to that pixel. How good this algorithm is decides how accurate the colors in the final image are.
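Manufacturers keep their actual algorithms to themselves, so the following is only a toy illustration of the idea, not any vendor's method. For every pixel it averages the samples of each color found in the surrounding 3x3 neighborhood. The function name `demosaic` is ours, and the sketch assumes an RGGB layout and an image of at least 2x2 pixels.

```python
BAYER_TILE = (("R", "G"),
              ("G", "B"))

def demosaic(raw, tile=BAYER_TILE):
    """Naive demosaic: per-color average over each pixel's 3x3 neighborhood.

    raw is a list of rows holding one brightness value per pixel.
    Returns rows of (R, G, B) tuples.
    """
    height, width = len(raw), len(raw[0])
    out = []
    for r in range(height):
        out_row = []
        for c in range(width):
            sums = {"R": 0.0, "G": 0.0, "B": 0.0}
            counts = {"R": 0, "G": 0, "B": 0}
            # Visit the pixel and its neighbors, clipped at the image edges.
            for rr in range(max(r - 1, 0), min(r + 2, height)):
                for cc in range(max(c - 1, 0), min(c + 2, width)):
                    color = tile[rr % 2][cc % 2]
                    sums[color] += raw[rr][cc]
                    counts[color] += 1
            out_row.append(tuple(sums[k] / counts[k] for k in "RGB"))
        out.append(out_row)
    return out

# Raw capture of a pure red scene: only pixels under red filters are bright.
raw = [[255, 0, 255, 0],
       [0, 0, 0, 0],
       [255, 0, 255, 0],
       [0, 0, 0, 0]]
print(demosaic(raw)[1][1])  # (255.0, 0.0, 0.0)
```

Real algorithms are far more careful around edges and fine detail, which is where a simple average like this one produces color fringing.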

As an aside, the raw digital image from a sensor is always black and white. We only see colors because the image has been demosaiced. When we open a raw file in a photo editor, such as Adobe Photoshop, the application is using its own demosaicing algorithm to generate the colors, which is why different applications will produce slightly different colors for raw files.

Once the demosaicing is done, the image is then ready for further processing, where things such as exposure, contrast, highlights, shadows, white balance, noise, and sharpness are adjusted to produce the final image.

Coming back to the filter, the Bayer filter uses an RGGB array. As mentioned before, it corresponds most closely to how our eyes work. As for why there are two green pixels, it's because green sits bang in the middle of the visible color spectrum for humans and our eyes are most sensitive to it. The green channel also acts as luminance, and you'll notice that adjusting the green level in an image affects not just the color but also the overall brightness of the image.
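One way to put a number on how much green contributes to brightness is the luma formula from the Rec. 709 video standard. Bringing in that standard is our choice for illustration; nothing here says phone cameras use exactly these weights.

```python
def luma_rec709(r, g, b):
    """Weighted brightness of an RGB color using the Rec. 709 luma weights."""
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

# Compare how bright full-strength red, green and blue each appear.
for name, color in (("red", (255, 0, 0)), ("green", (0, 255, 0)), ("blue", (0, 0, 255))):
    print(name, round(luma_rec709(*color), 1))
# red 54.2
# green 182.4
# blue 18.4
```

With these weights, green accounts for roughly 70% of perceived brightness, which is why changing the green level shifts the overall brightness so noticeably.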

However, alternatives to the Bayer filter have been around for a while. CMY or CYYM is one of them, where the filter consists of cyan, yellow, and magenta colors. The advantage of the CYYM filter is that it allows more light to pass through to the sensor, because each of these colors lets through two of the three primary colors instead of just one. As you can tell from the name, a Bayer filter is a filter, so it removes some of the light. On top of that, since only half the pixels on the sensor are getting green light and a quarter each are getting red and blue light, you are also never getting the full promised resolution of your sensor. A CYYM sensor, with its higher light transmission, was designed to combat this and in theory produce much more resolution from the same number of pixels.

However, as it turns out, it's not easy to get natural-looking images out of a CYYM sensor, and it requires a much more complex demosaicing algorithm than RGGB does. Due to this, it never quite gained popularity and cameras with full CYYM sensors remain a rarity, with none being made in the recent past.

This is where the RYYB sensor on the Huawei P30 Pro comes in. The engineers have used the familiar red and blue channels but replaced the two green channels with two yellow ones. Since a yellow filter passes both red and green light, this allows the sensor to capture more light than with the green filters, at least in theory.
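A back-of-the-envelope model shows why swapping green for yellow should help. The sketch below assumes perfectly ideal filters and white light made of equal parts red, green and blue, with a yellow filter passing both red and green. These are our simplifications, not measurements of the IMX600y, and the names `PASSES` and `light_collected` are made up for this example.

```python
# Idealized filters: which of the three primaries each filter lets through.
# Real dyes have broad, overlapping responses, so this is only a toy model.
PASSES = {"R": "R", "G": "G", "B": "B", "Y": "RG"}

def light_collected(tile, scene):
    """Sum the light reaching all four pixels of one 2x2 tile.

    tile  -- four filter letters, e.g. "RGGB" or "RYYB"
    scene -- dict with the amount of R, G and B light hitting each pixel
    """
    return sum(scene[primary] for f in tile for primary in PASSES[f])

white = {"R": 1.0, "G": 1.0, "B": 1.0}
rggb = light_collected("RGGB", white)  # 4.0
ryyb = light_collected("RYYB", white)  # 6.0
print(ryyb / rggb)                     # 1.5 in this toy model
```

The toy model lands at 50% more light, in the same ballpark as Huawei's 40% figure. That does not verify the claim, since real filters are less tidy than the ones assumed here.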

Of course, there is still the issue of demosaicing but with the improvement in that area as well as with the use of artificial intelligence that analyzes the contents of the image, it is now possible to correctly determine colors even without a dedicated green channel. Indeed, in our reviews, we saw no funny business going on with the colors from the P30 Pro.
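Huawei has not published how its processing works, so the snippet below is not its method. It only illustrates, under the idealized assumption that a yellow sample equals red plus green, how a green value can be estimated when no pixel measures green directly. The function name `ryb_to_rgb` is hypothetical.

```python
def ryb_to_rgb(red, yellow, blue):
    """Estimate an (R, G, B) triple from red, yellow and blue samples.

    Assumes (hypothetically) that yellow == red + green exactly,
    so green can be recovered by subtraction.
    """
    green = max(yellow - red, 0.0)  # clamp: noise can push the difference below zero
    return (red, green, blue)

print(ryb_to_rgb(50.0, 170.0, 30.0))  # (50.0, 120.0, 30.0)
```

In practice the subtraction combines the noise of two samples, and real filter responses overlap, which is part of why the demosaicing step is harder for RYYB than for RGGB.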

Of course, the effectiveness of this method over standard RGGB cannot be easily tested or verified, so we have to take Huawei's word for it. But it is certainly an interesting solution to improve low light image quality and is possibly the reason why the phone has such excellent low light performance.

But that's pretty much it for this discussion. If you have any questions or want us to discuss any other points in future, then let us know in the comments below.

Reader comments

- Avalon

- 05 Nov 2020

- m@R

Was red pixel wavelength changed because yellow is too close to red? They could have made RGYB or even better RGBW or separate monochrome camera. Graphene CMOS are x10 more sensitive.

- dimz

- 27 Jun 2020

- 0Vd

Yellow light wavelength is brighter than green, therefore a sensor/filter combo favouring it captures more light.

- Lookasso

- 14 Oct 2019

- 3h8

After xperia with totally unusable display just because of a tiny crack, while with all other brands I've tried the phone can still be used with a totally cracked display, I'm never going to buy again xperia no matter what.
