Samsung creates RAM with integrated AI processing hardware

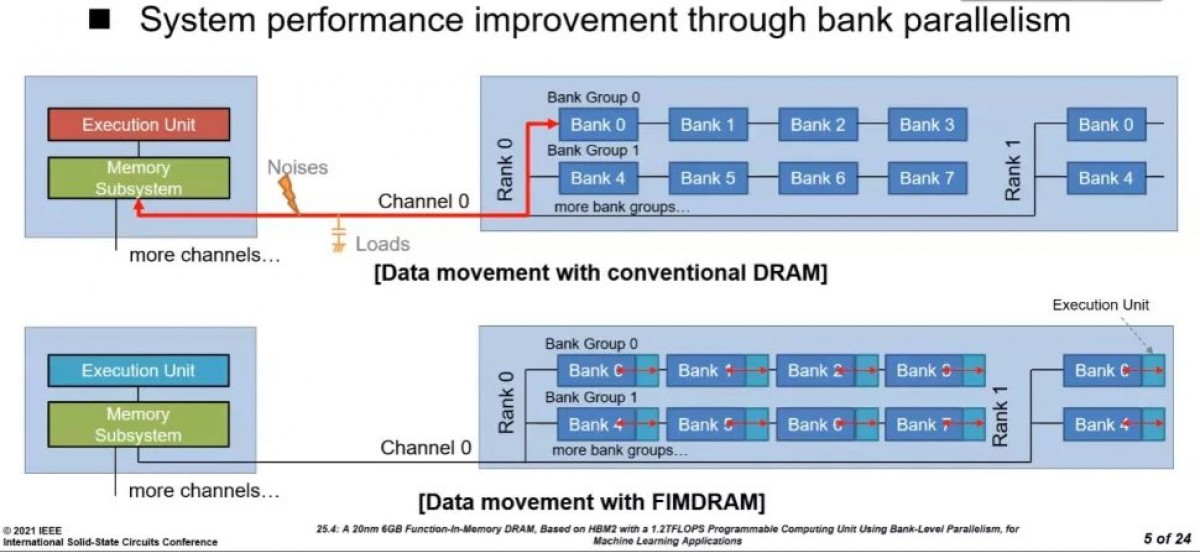

A processing unit (CPU, GPU or anything else) and RAM are typically separate things built on separate chips. But what if they were part of the same chip, all mixed together? That’s exactly what Samsung did to create the world’s first High Bandwidth Memory (HBM) with built-in AI processing hardware, which it calls HBM-PIM (for processing-in-memory).

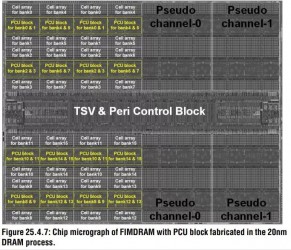

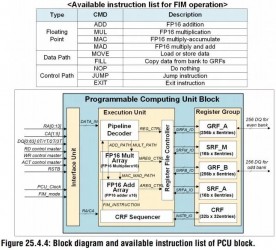

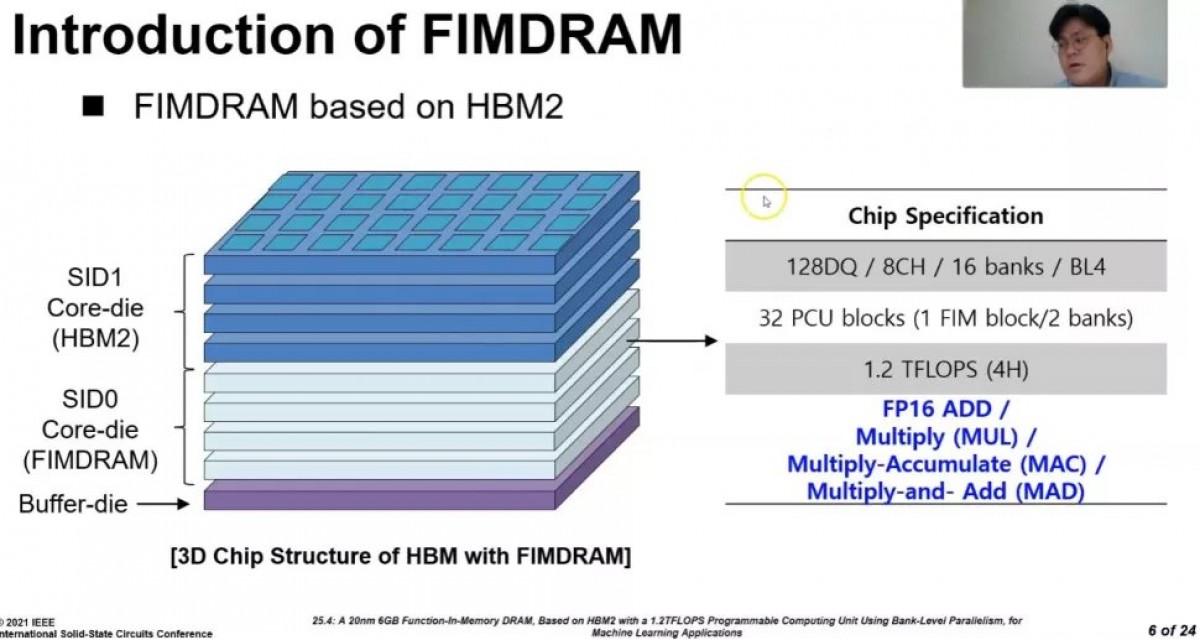

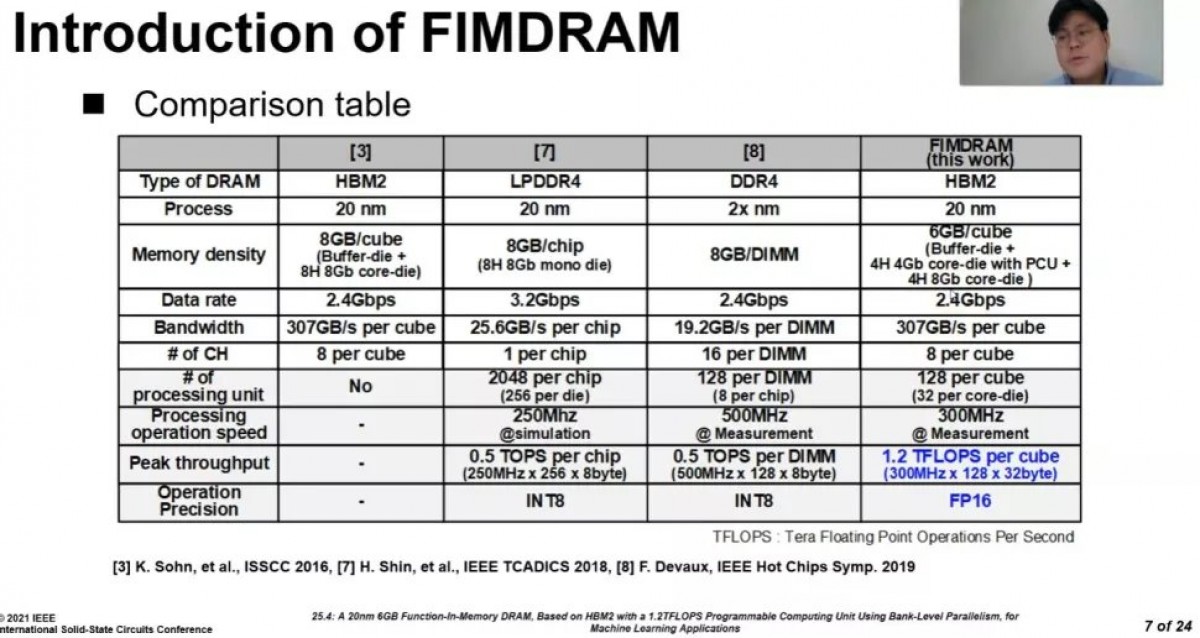

It took its HBM2 Aquabolt chips and added Programmable Computing Units (PCU) between the memory banks. These are relatively simple and operate on 16-bit floating point values with a limited instruction set – they can move data around and perform multiplications and additions.
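To give a feel for what "16-bit floating point with multiplications and additions" means in practice, here is a minimal Python sketch of an FP16 multiply-accumulate. It is only an illustration: the function names (`to_fp16`, `fp16_mac`, `fp16_dot`) are made up for this example, and the choice to round to FP16 after every step is an assumption, since the article does not describe the PCU's actual instruction set or rounding behaviour.

```python
import struct


def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value.

    struct's "e" format is the 16-bit float; values beyond the FP16 range
    (about 65504) raise OverflowError rather than becoming infinity.
    """
    return struct.unpack("<e", struct.pack("<e", x))[0]


def fp16_mac(acc: float, a: float, b: float) -> float:
    """Hypothetical multiply-accumulate: acc + a * b, rounded to FP16
    after the multiply and again after the add (an assumption)."""
    product = to_fp16(to_fp16(a) * to_fp16(b))
    return to_fp16(to_fp16(acc) + product)


def fp16_dot(xs, ys) -> float:
    """Dot product built only from FP16 multiplies and adds."""
    acc = 0.0
    for a, b in zip(xs, ys):
        acc = fp16_mac(acc, a, b)
    return acc


if __name__ == "__main__":
    # Small integers are exactly representable in FP16.
    print(fp16_dot([1.0, 2.0, 3.0], [4.0, 5.0, 6.0]))  # 32.0
    # 0.1 is not exactly representable, so it is rounded slightly.
    print(to_fp16(0.1))
```

Dot products like this are the core of neural network layers, which is why a unit that can only move data, multiply and add is still useful for AI workloads.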

PCUs mixed in with the memory banks • The PCU is a very limited FP16 processor

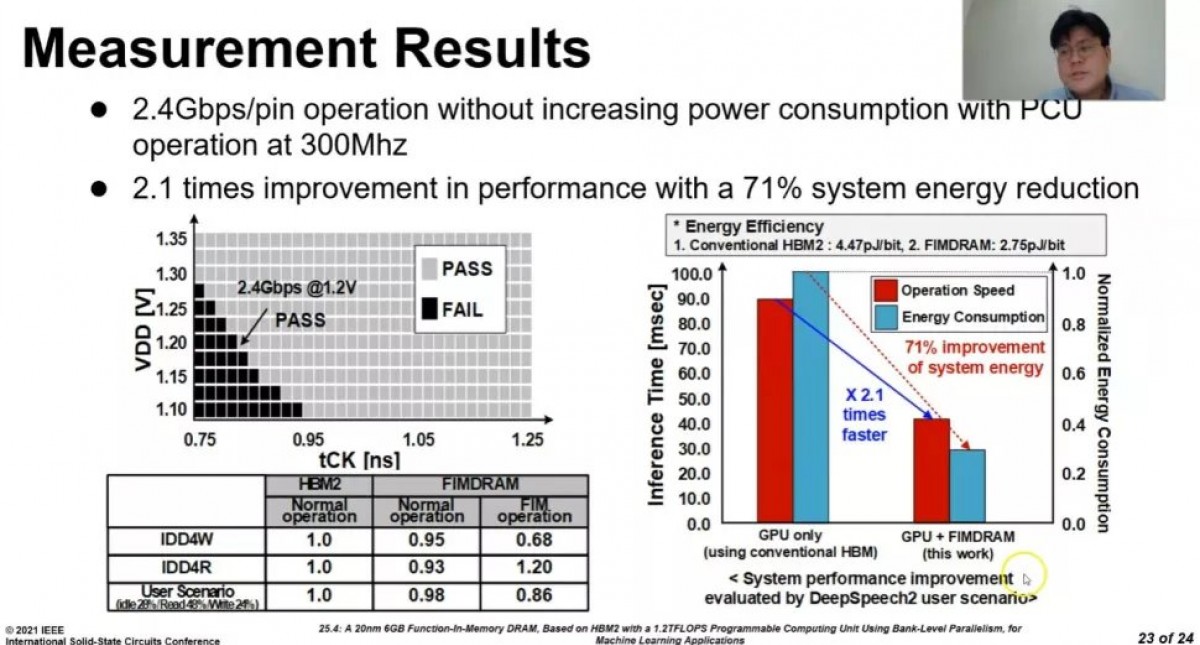

But there are many PCUs and they literally sit next to the data they are working on. Samsung managed to get the PCUs working at 300 MHz, which works out to 1.2 TFLOPS processing power per chip. And it kept the power usage (per chip) the same while transferring data at 2.4 Gbps per pin.
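The figures above can be cross-checked with some quick arithmetic. The sketch below derives how many floating point operations per clock cycle the quoted 1.2 TFLOPS at 300 MHz implies, and what 2.4 Gbps per pin means in total bandwidth. The 1024-bit interface width comes from the HBM2 standard, not from the article, so treat the bandwidth figure as an estimate under that assumption.

```python
# Figures quoted in the article.
PCU_CLOCK_HZ = 300e6    # 300 MHz
CHIP_FLOPS = 1.2e12     # 1.2 TFLOPS per chip
PIN_RATE_BPS = 2.4e9    # 2.4 Gbps per pin

# Assumption (not from the article): an HBM2 stack exposes a
# 1024-bit wide data interface.
HBM2_INTERFACE_PINS = 1024


def flops_per_cycle(total_flops: float, clock_hz: float) -> float:
    """Operations the whole chip must complete each clock cycle."""
    return total_flops / clock_hz


def stack_bandwidth_gbytes(pin_rate_bps: float, pins: int) -> float:
    """Aggregate bandwidth in gigabytes per second (8 bits per byte)."""
    return pin_rate_bps * pins / 8 / 1e9


if __name__ == "__main__":
    print(flops_per_cycle(CHIP_FLOPS, PCU_CLOCK_HZ))
    print(stack_bandwidth_gbytes(PIN_RATE_BPS, HBM2_INTERFACE_PINS))
```

Under these assumptions the chip has to sustain about 4,000 floating point operations per cycle, which is only possible because the work is spread across many PCUs, and the interface carries roughly 307 GB/s.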

Per-chip power usage may be the same, but overall system energy consumption drops by 71%. This is because a typical CPU would need to move data twice – read the input then write the result. With HBM-PIM the data doesn’t really go anywhere.

It’s not just about saving power: using PIM for machine learning and inference tasks, researchers saw system performance more than double. That’s a win-win situation.

The HBM-PIM design is backwards compatible with regular HBM2 chips, so no new hardware needs to be developed – the software just needs to tell the PIM system to switch from regular mode to in-memory processing mode.

There is one issue with this: the PCUs take up space previously occupied by memory banks. This cuts the capacity of each die in half – down to 4 gigabits. Samsung decided to split the difference and combine 4 gigabit PIM dies with 8 gigabit regular HBM2 dies. Using four of each, it created 6 gigabyte stacks.
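The stack capacity follows directly from the die mix, keeping in mind that die capacities are quoted in gigabits and stack capacity in gigabytes. A small sketch of the arithmetic (the helper name `stack_capacity_gbytes` is made up for this example, and the all-regular eight-die stack is included only as an assumed point of comparison):

```python
BITS_PER_BYTE = 8


def stack_capacity_gbytes(dies) -> float:
    """Total stack capacity in gigabytes.

    `dies` is a list of (die_count, capacity_in_gigabits) pairs.
    """
    total_gbit = sum(count * gbit for count, gbit in dies)
    return total_gbit / BITS_PER_BYTE


if __name__ == "__main__":
    # Four 4 Gb PIM dies plus four 8 Gb regular dies, as in the article.
    hbm_pim_stack = [(4, 4), (4, 8)]
    print(stack_capacity_gbytes(hbm_pim_stack))  # 6.0

    # For comparison (assumption): eight regular 8 Gb dies.
    regular_stack = [(8, 8)]
    print(stack_capacity_gbytes(regular_stack))  # 8.0
```

So the mixed stack gives up a quarter of the capacity of an all-regular stack of the same height, rather than the half it would lose if every die were a PIM die.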

There’s some more bad news – it will be a while before HBM-PIM lands in consumer hardware. For now Samsung has sent out chips to be tested by partners developing AI accelerators and expects the design to be validated by July.

HBM-PIM will be presented at the International Solid-State Circuits Virtual Conference (ISSCC) this week, so we can expect more details then.

Reader comments

- AnonD-731363

- 19 Feb 2021

- Lfw

Soon we'll end up like a Terminator movie or an I, Robot movie, where machines will replace us everywhere.

- Jose

- 18 Feb 2021

- DkP

Didn't Mitsubishi 3D RAM on Evans & Sutherland image generators have the same functions?...
